I’m trying to keep up my jaundiced eye here, but I feel like tonight I have seen a Democratic party unlike any I have seen before in my lifetime. Walter Mondale was perhaps the last of the old guard still to possess some fight. After him came Dukakis, Clinton, Al Gore and John Kerry, and none of them had it. They all seemed too timid, too poll-tested, too cowed. First last night in Joe Biden’s speech, and then again tonight in Barack Obama’s, I heard a Democratic party unbowed, spirited, confident.

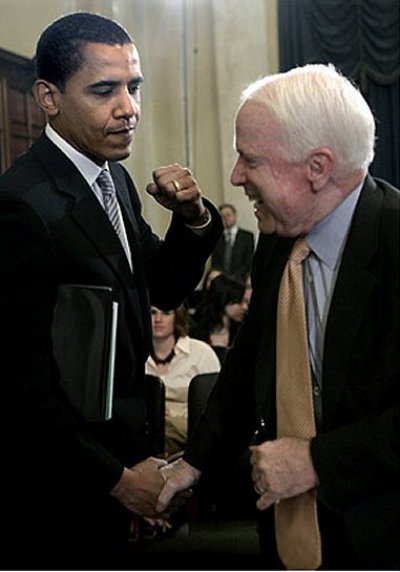

Senator Biden’s introduction by his son and his own discussion of his family were surprisingly emotional, and seemingly so for everyone involved. His speech presented the version of values that Democrats should be putting forward. It was tough on foreign policy and, unlike Democrats for the last eight years, effortlessly sincere and uncontrived. As Matthew Yglesias pointed out (“It’s Biden,” ThinkProgress, 23 August 2008), the selection of Biden for VP “signals a desire to take the argument to John McCain on national security policy” and deliver to voters “a full-spectrum debate about the issues facing the country rather than a positional battle in which one party talks about the economy and the other talks about national security.” In Joseph Biden I think I first, finally saw a different, rejuvenated Democratic party.

The same was true for Barack Obama’s speech tonight. His cadence was off in places, but the speech was defiant and pugilistic, and it signaled to me that the Senator has absorbed all the right lessons about the campaign. I think many of the myths that have plagued the Senator, as well as the party at large, for the last few weeks have been definitively left behind after tonight. The speech showed some of the populism that worked so well for Al Gore in the final weeks of the 2000 election. My favorite part, as with Senator Biden, was when Senator Obama took the foreign policy issue by the horns:

You don’t defeat — you don’t defeat a terrorist network that operates in 80 countries by occupying Iraq. You don’t protect Israel and deter Iran just by talking tough in Washington. You can’t truly stand up for Georgia when you’ve strained our oldest alliances.

If John McCain wants to follow George Bush with more tough talk and bad strategy, that is his choice, but that is not the change that America needs.

If Chris Matthews waxing rapturous is any indication, then Senator Obama achieved everything he needed to. After Chris Matthews, what more can you ask for? Who knows, maybe even Maureen Dowd will write a positive review. I think McCain’s speech a week from now will look pretty wooden in comparison.

My only concern is one that I think Patrick Buchanan raised last night: after the Republicans spend next week ripping into Senator Obama, the Democrats may regret going so easy on Senator McCain. Alternatively, Democrats may finally have learned that you have to run your negative stuff by stealth.