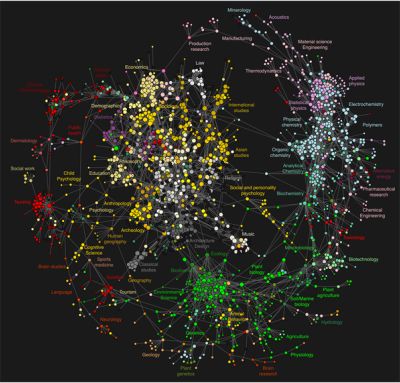

Knowledge is a network phenomenon. Only primitive knowledge consists of unsystematized catalogues of facts; system is the highest state of knowledge. At present the system of our knowledge might be called clumpy: well-developed disciplines with only tenuous connections between them. Knowledge is still subject to cluster analysis. The apogee of knowledge will be a system of complete propositional consistency.

I present here a selection of discussions of the network nature of knowledge:

Kevin Kelly (“The Fifth and Sixth Discontinuity,” The Technium, 15 June 2009):

We casually talk about the “discovery of America” in 1492, or the “discovery of gorillas” in 1856, or the “discovery of vaccines” in 1796. Yet vaccines, gorillas and America were not unknown before their “discovery.” Native peoples had been living in the Americas for 10,000 years before Columbus arrived and they had explored the continent far better than any European ever could. Certain West African tribes were intimately familiar with the gorilla, and with many more primate species yet to be “discovered.” Dairy farmers had long been aware of the protective power of vaccines that related diseases offered, although they did not have a name for it. The same argument can be made about whole libraries’ worth of knowledge (herbal wisdom, traditional practices, spiritual insights) that are “discovered” by the educated but only after having been long known by native and folk peoples. These supposed “discoveries” seem imperialistic and condescending, and often are.

Yet there is one legitimate way in which we can claim that Columbus discovered America, and the French-American explorer Paul du Chaillu discovered gorillas, and Edward Jenner discovered vaccines. They “discovered” previously locally known knowledge by adding it to the growing pool of structured global knowledge. Nowadays we would call that accumulating structured knowledge science. Until du Chaillu’s adventures in Gabon any knowledge about gorillas was extremely parochial; the local tribes’ vast natural knowledge about these primates was not integrated into all that science knew about all other animals. Information about “gorillas” remained outside of the structured known. In fact, until zoologists got their hands on Paul du Chaillu’s specimens, gorillas were scientifically considered to be a mythical creature similar to Bigfoot, seen only by uneducated, gullible natives. Du Chaillu’s “discovery” was actually science’s discovery. The meager anatomical information contained in the killed animals was fitted into the vetted system of zoology. Once their existence was “known,” essential information about the gorilla’s behavior and natural history could be annexed. In the same way, local farmers’ knowledge about how cowpox could inoculate against smallpox remained local knowledge and was not connected to the rest of what was known about medicine. The remedy therefore remained isolated. When Jenner “discovered” the effect, he took what was known locally, and linked its effect into medical theory and all the little science knew of infection and germs. He did not so much “discover” vaccines as “link in” vaccines. Likewise America. Columbus’s encounter put America on the map of the globe, linking it to the rest of the known world, integrating its own inherent body of knowledge into the slowly accumulating, unified body of verified knowledge. Columbus joined two large continents of knowledge into a growing global consilience.

The reason science absorbs local knowledge and not the other way around is because science is a machine we have invented to connect information. It is built to integrate new knowledge with the web of the old. If a new insight is presented with too many “facts” that don’t fit into what is already known, then the new knowledge is rejected until those facts can be explained. A new theory does not need to have every unexpected detail explained (and rarely does) but it must be woven to some satisfaction into the established order. Every strand of conjecture, assumption, observation is subject to scrutiny, testing, skepticism and verification. Piece by piece consilience is built.

Pierre Bayard (How to Talk About Books You Haven’t Read [New York: Bloomsbury, 2007]):

As cultivated people know (and, to their misfortune, uncultivated people do not), culture is above all a matter of orientation. Being cultivated is a matter not of having read any book in particular, but of being able to find your bearings within books as a system, which requires you to know that they form a system and to be able to locate each element in relation to the others. …

Most statements about a book are not about the book itself, despite appearances, but about the larger set of books on which our culture depends at that moment. It is that set, which I shall henceforth refer to as the collective library, that truly matters, since it is our mastery of this collective library that is at stake in all discussions about books. But this mastery is a command of relations, not of any book in isolation …

The idea of overall perspective has implications for more than just situating a book within the collective library; it is equally relevant to the task of situating each passage within a book. (pp. 10-11, 12, 14)

And I would add that this holds not only for passages within a book, but for passages across books. Books, passages, paragraphs and the like are all stand-ins for, or not-quite-there-yet stabs at, the notion of memes. It is the relations among memes that matter; books, and passages from them, are merely proxies or meme carriers. A book is a bundle of memes, and those memes bear a certain set of relations to all the other memes bundled in all the other books (or magazines, memos, blog posts, radio broadcasts, conversations, thoughts, or any other carrier of memes).

This brings us to the ur-theory of them all, W. V. O. Quine’s thumbnail sketch of epistemology from “Two Dogmas of Empiricism” (The Philosophical Review, vol. 60, 1951, pp. 20-43; reprinted in From a Logical Point of View [Cambridge, Mass.: Harvard University Press, 1953; second, revised edition, 1961]):

The totality of our so-called knowledge or beliefs, from the most casual matters of geography and history to the profoundest laws of atomic physics or even of pure mathematics and logic, is a man-made fabric which impinges on experience only along the edges. Or, to change the figure, total science is like a field of force whose boundary conditions are experience. A conflict with experience at the periphery occasions readjustments in the interior of the field. Truth values have to be redistributed over some of our statements. Reevaluation of some statements entails re-evaluation of others, because of their logical interconnections — the logical laws being in turn simply certain further statements of the system, certain further elements of the field. Having reevaluated one statement we must reevaluate some others, whether they be statements logically connected with the first or whether they be the statements of logical connections themselves. But the total field is so undetermined by its boundary conditions, experience, that there is much latitude of choice as to what statements to reevaluate in the light of any single contrary experience. No particular experiences are linked with any particular statements in the interior of the field, except indirectly through considerations of equilibrium affecting the field as a whole.

If this view is right, it is misleading to speak of the empirical content of an individual statement — especially if it be a statement at all remote from the experiential periphery of the field. Furthermore it becomes folly to seek a boundary between synthetic statements, which hold contingently on experience, and analytic statements which hold come what may. Any statement can be held true come what may, if we make drastic enough adjustments elsewhere in the system. Even a statement very close to the periphery can be held true in the face of recalcitrant experience by pleading hallucination or by amending certain statements of the kind called logical laws. Conversely, by the same token, no statement is immune to revision. Revision even of the logical law of the excluded middle has been proposed as a means of simplifying quantum mechanics; and what difference is there in principle between such a shift and the shift whereby Kepler superseded Ptolemy, or Einstein Newton, or Darwin Aristotle?

For vividness I have been speaking in terms of varying distances from a sensory periphery. Let me try now to clarify this notion without metaphor. Certain statements, though about physical objects and not sense experience, seem peculiarly germane to sense experience — and in a selective way: some statements to some experiences, others to others. Such statements, especially germane to particular experiences, I picture as near the periphery. But in this relation of “germaneness” I envisage nothing more than a loose association reflecting the relative likelihood, in practice, of our choosing one statement rather than another for revision in the event of recalcitrant experience. For example, we can imagine recalcitrant experiences to which we would surely be inclined to accommodate our system by reevaluating just the statement that there are brick houses on Elm Street, together with related statements on the same topic. We can imagine other recalcitrant experiences to which we would be inclined to accommodate our system by reevaluating just the statement that there are no centaurs, along with kindred statements. A recalcitrant experience can, I have already urged, be accommodated by any of various alternative reevaluations in various alternative quarters of the total system; but, in the cases which we are now imagining, our natural tendency to disturb the total system as little as possible would lead us to focus our revisions upon these specific statements concerning brick houses or centaurs. These statements are felt, therefore, to have a sharper empirical reference than highly theoretical statements of physics or logic or ontology. The latter statements may be thought of as relatively centrally located within the total network, meaning merely that little preferential connection with any particular sense data obtrudes itself.

As an empiricist I continue to think of the conceptual scheme of science as a tool, ultimately, for predicting future experience in the light of past experience. Physical objects are conceptually imported into the situation as convenient intermediaries — not by definition in terms of experience, but simply as irreducible posits comparable, epistemologically, to the gods of Homer. Let me interject that for my part I do, qua lay physicist, believe in physical objects and not in Homer’s gods; and I consider it a scientific error to believe otherwise. But in point of epistemological footing the physical objects and the gods differ only in degree and not in kind. Both sorts of entities enter our conception only as cultural posits. The myth of physical objects is epistemologically superior to most in that it has proved more efficacious than other myths as a device for working a manageable structure into the flux of experience.

Image from Bollen, Johan, Herbert Van de Sompel, Aric Hagberg, Luis Bettencourt, Ryan Chute, et al., “Clickstream Data Yields High-Resolution Maps of Science,” PLoS ONE, vol. 4, no. 3, March 2009, e4803, doi:10.1371/journal.pone.0004803. See the article for a larger version.