It’s one thing to recognize that the properties of the component aren’t those of the composite. But on the positive side, in the interstitiary of this seemingly commonplace observation, lies an entire world. Recognizing the fallacy of composition is perhaps the first and most important step on the way to systems science. Characteristics don’t scale in a straightforward or linear way, and emergent properties, complex systems, adaptation and evolution, ecology, intelligence and society [1] are all the positive side of that recognition.

I mention the notion of an interstitiary between components and composites because this distinction is often thought of as discrete and binary. That is, people imagine components and composites and no intermediate states. This is because when people think about the fallacy of composition, they tend to think of a machine-type example, or one of substance and form, e.g. just because an ingot of metal is pretty durable doesn’t mean that a precision machine made of metal parts is too. But emergence-type phenomena are a unique kind of composition not captured in the machine analogy of component and composition.

A machine is made of heterogeneous parts, and that is misleading here. Each part is relatively property-thin; by themselves the parts are just a collection of odd shapes. It is only when completely assembled that the function of the whole is manifest. Structure is everything in the machine. Intermediate states between parts strewn on the table and complete assembly accomplish nothing. Minor deviations from the prescribed structure accomplish nothing. And the whole is efficient: that is, it is specifically designed to minimize emergent or any other extraneous characteristics. Design and emergence are at odds here. [2]

Emergence, complex systems, et cetera come about from collections of more or less homogeneous corpuscles interacting in a largely unstructured way. [3] The major difference here is that while the machine has no or few remarkable intermediary states, scale is all-important in systems phenomena, which tend to display differing and unique behavior at various scales. And where the machine is highly structure-sensitive, complex systems tend to be robust in their various states. [4]

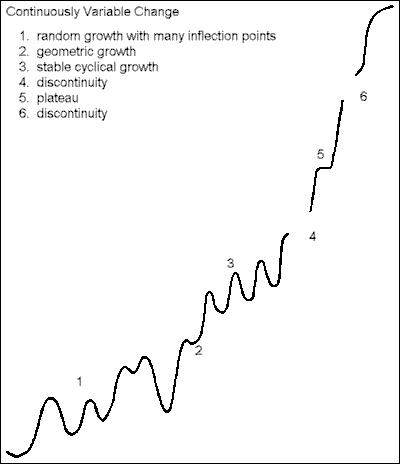

The key is that more is different (nearly the motto of the discipline). [5] The common-sense imagining, prior to the fallacy of composition, is that things scale in a linear way. More means more of the same. Then there is second-order sophistication about scale. People add in the notion of a discontinuity. Things scale until they reach a certain threshold, at which point there is a discontinuity. More is different. But this is still a relatively binary conception, with simply two sides of the discontinuity: one side where the logic of linear growth prevails and the other where that of emergence or complexity prevails. There is a third-order sophistication about scale for which the binary view of second-order thinking is still too simple, and in which the relationship between scale and properties is continuously variable.

As opposed to these simpler first- and second-order notions, continuously variable growth and development happen through many phases distinguished by differing logics or marked by different epiphenomena (something more like the imaginary graph above). As a system or network gains in members or in interactions, one set of behaviors becomes strained and unstable as it approaches the upper-bound threshold of the given logic. The threshold crossed, the previous logic gives way to a new logic. The pattern repeats, with the new logic holding for a period, but it too peters out in favor of a subsequent logic. And so on. Or perhaps there are tiers of controlling logics operative simultaneously. There may be meta-epi-phenomena as the various transitions admit a pattern of cycling logics or a second-order logic to their relations. [6] No behavior or sequence is fundamental to systems, to the process of growth or to any particular phenomenon. We should dispense with the idea of a sine qua non behavior of systems in favor of a continuum of potentially many different behaviors as a system grows and changes. [7]

I will give a few examples to demonstrate the changing relation between the prevailing logic and a system phenomenon under growth.

-

Multiple logics. Consider that paradigmatic example of complexity: grains of sand falling onto a pile (a Bak-Tang-Wiesenfeld sandpile). As grains fall onto the pile, they tumble down the side and come to rest according to a standard distribution. Most come to rest close to the drop point, so the pile grows in height, but only a few roll all the way to the bottom, so the base remains largely the same area. As a result, the incline of the sides of the pile grows ever steeper. But there is a range of values for which the ratio of base to height is stable. When the pile reaches its upper bound of stability, the pile collapses. But it doesn’t collapse all the way to a layer of sand one grain thick. The collapse comes to a halt once the pile has reached the lower bound of the ratio of base to height (the lower bound being where the slope of the side of the pile is such that friction overcomes gravity and momentum). The pile recommences its growth in height. With a new, broader base having been established, it will achieve a greater height this time before reaching the critical threshold.

The pile doesn’t grow continuously until collapse, though. It is constantly experiencing local collapse. The sand pile demonstrates scale symmetry insofar as there are slides of all sizes, starting with a single, previously stable grain coming loose and tumbling a little further down a side. Single grains frequently start a chain reaction leading to larger slides. The collapse of the whole pile isn’t a phenomenon of its own, unrelated to the logic of local slides: the catastrophic collapse of the pile is usually the result of a local slide running away to the scale of the entire pile.

But fractal collapse isn’t a constant. Rather, its probability is a function of where the pile is in the growth-collapse cycle. At the lower bound of the range of stability, any collapse is almost impossible. A single grain may break loose and tumble to a more stable location, but owing to the widespread state of stability, it is not likely to set off a chain reaction leading to a large slide. At the top end, catastrophic collapse approaches certainty. A growing proportion of sand grains are in unstable positions. More are likely to break loose and, having broken loose, collide with other unstable grains, setting off chain reactions. As the pile goes through the positive phase of the growth-collapse cycle, it traverses a range of increasing collapse probability, from almost impossible to almost certain, and its fractal dimension grows.

Tally the sheer number of logics at work in this seemingly unnoteworthy phenomenon. The overall pattern is linear growth: the pile grows in mass in equal units in equal time. The shape of the pile demonstrates an oscillating pattern, between a steep cone and a shallow cone. The height of the pile superficially demonstrates cyclical growth. Upon closer inspection, the growth of the height of the pile turns out to be fractal. And even that fractal growth isn’t constant, but one of increasing fractal dimension.
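The slides-of-all-sizes behavior can be seen in a few lines of simulation. What follows is only a minimal sketch, assuming the usual grid formulation of the Bak-Tang-Wiesenfeld model (a cell holding four grains topples and passes one grain to each neighbor, and grains pushed past the edge are lost). That is a simplification of the physical cone described above, and the function names, grid size and grain count are illustrative choices of mine rather than anything canonical.

```python
import random
from collections import Counter


def drop_grain(grid, size, rng, threshold=4):
    """Drop one grain on a random cell, relax the grid, and return the
    avalanche size, measured as the number of topplings it caused."""
    r, c = rng.randrange(size), rng.randrange(size)
    grid[r][c] += 1
    topplings = 0
    unstable = [(r, c)] if grid[r][c] >= threshold else []
    while unstable:
        i, j = unstable.pop()
        if grid[i][j] < threshold:
            continue  # already relaxed by an earlier toppling
        grid[i][j] -= threshold
        topplings += 1
        if grid[i][j] >= threshold:
            unstable.append((i, j))
        for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            ni, nj = i + di, j + dj
            # Grains pushed past the edge simply fall off the table.
            if 0 <= ni < size and 0 <= nj < size:
                grid[ni][nj] += 1
                if grid[ni][nj] >= threshold:
                    unstable.append((ni, nj))
    return topplings


def simulate(size=20, grains=5000, seed=0):
    """Drop `grains` grains one at a time; return avalanche sizes and final grid."""
    rng = random.Random(seed)
    grid = [[0] * size for _ in range(size)]
    sizes = [drop_grain(grid, size, rng) for _ in range(grains)]
    return sizes, grid


if __name__ == "__main__":
    sizes, _ = simulate()
    # Bin avalanche sizes by powers of two to show slides at every scale.
    bins = Counter(s.bit_length() for s in sizes)
    for k in sorted(bins):
        low = 0 if k == 0 else 2 ** (k - 1)
        print(f"avalanches of size >= {low:4d}: {bins[k]}")
```

Run as a script, this prints a rough histogram of avalanche sizes. Most drops disturb nothing, while a few set off slides spanning much of the grid, which is the single-grain-to-runaway continuum described above. The sketch does not model the base-to-height ratio or the growth-collapse cycle of a physical pile.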

-

A complicated interstitiary. A discontinuity is probably always preceded by some sort of turbulence, a breakdown in the previously prevailing relationship. A prevailing logic doesn’t yield instantaneously to its successor. In materials science, at least, this is well documented in the form of the microstructure of phase transitions.

As a social-scientific example, nuclear weapons represent a discontinuity in the growth of military power, but how much of one? Countries had already acquired “city-busting” and potentially civilization-destroying capability using the regular old Newtonian technologies of modern air power and chemical explosives. The U.S. imagined “bombing Vietnam back to the stone age” employing conventional methods. So it would seem that military power (technology) had already crossed an inflection point and gone non-linear in a way that necessitated major strategic reconsideration prior to the discontinuity of nuclear weapons.

-

Many discontinuities. People have a tendency to conceptualize consciousness in the model of the binary discontinuity. Neural networks grow in size from tiny nerve bundles in nematode worms up to pre-hominids and then somewhere in the Pleistocene whamo, the discontinuity and consciousness and that’s the pinnacle. Some people imagine the difference between regular people and geniuses to be a little discontinuity, rather than a difference of quantity. Hence the obsession with Einstein’s brain, Descartes’s skull, and so on.

But I imagine consciousness not as a big bang but as a development with perhaps a number of discontinuities and plateaus (the first reflex, the first pain, the first internal world model, the first declarative memory, the first language of discrete symbols, the first syntax, the first theory of mind) on the way to humans. And our intelligence is just another plateau on the path. There is the singularity somewhere beyond us, and still more discontinuities, unimaginable to us, even beyond that. As intelligence is not one thing, I imagine each of the many faculties having its own trajectory, each with multiple discontinuities of its own, with the synergy of the many faculties being of uneven development and their interplay subject to system effects. [8]

Instead of relying on the sort of intuitionism that says something like “We know that there has been a discontinuity in strategic thought because we read Thomas Schelling now instead of Helmuth von Moltke,” we should adopt systematic mathematical methods to analyze change: to determine when and where system effects are at work, which ones are at work, the extent of their range, the exact location of inflection points or discontinuities, where the logic of a tipping point takes over and the pace of accelerating assertion of the logic of the new régime, and to study turbulence, islands of stability, equilibria, feedback and other patterns that can develop. [9]

Notes

1. I am prone to write “collective intelligence” in place of “society,” but an ancillary point here is that all intelligence is collective (composite), whether it’s a society, so to speak, of individually dumb neurons in the brain of an individual or the more commonplace idea of a society, namely wisdom of crowds, invisible hand, social networking, superorganism type phenomena.

2. Though not necessarily so: we are learning how to harness emergent properties to our purposes, to design them in.

3. Though structure might be a concomitant emergent property, e.g. ecologies might exist in equilibrium, but that’s not because competition among species is well ordered.

4. This category error of misconstruing different component-composition behavior types, or not even identifying the second type at all, is perhaps illuminating to the overarching issue of intelligent design’s constant irreducible complexity mongering. One of the favorite analogies from their rhetoric is that of the mousetrap: remove a single component and it isn’t a slightly less effective mousetrap, it isn’t an anything, and natural selection can’t function on constellations that display no function. Notice how this relies entirely on the machine type of component-composition thinking. But if one sees phenotypic expression as an emergent property of genotypic change, then one might shift to the latter version of the component-composition model. A heavily determined trait will be stable over many changes in the underlying genes, until a critical threshold is reached, at which time rapid change in phenotype (the emergent property) could occur. A perfect example of the contrast between the two types of thinking that I am describing here is that version of evolution as the long, slow accumulation of mutation down through the millennia, which sees a more or less consistent, linear change, versus something like punctuated equilibrium, which imagines different logics of change predominating under different circumstances. I would go further to say that punctuated equilibrium is only a theory of discontinuity. A more elaborate theory of the deep history dynamics of evolution, or a macrotheory of evolution, as opposed to the microtheory we have at present, is required.

5. More is not binary, though more is frequently discrete: neural networks grow in whole neurons, societies grow in whole people. But not always. System effects happen as much in analog as in digital phenomena, e.g. strategy must adapt as power or capability increases, but power does not grow in discrete units.

6. Meta-epi-phenomena would be something observable only over extremely long timeframes for most phenomena with which we are familiar. Kondratiev cycles (45-60 years) and Forrester waves (200 years) would be examples from economics and sociology. The existence of such long patterns, whose period is outside the scope of our limited record, is part of the explanation for black swan type phenomena. I imagine that there are small models, probably in physics and biology, where the dynamics of such systems could be worked out in detail, then recognized in longer term examples based on partial data. As the patterns of systems theory are largely substratum independent, patterns discovered in one area are relevant to another. A well organized sub-discipline would better allow for the abstraction of higher-order patterns from their original fields of discovery and facilitate interdisciplinary transmission of knowledge. A pattern well characterized in observations of one phenomenon might be revolutionary to another area where the phenomenon is insufficiently well observed to know the entire continuum of behavior, but observed well enough to recognize that it is an instance of the former pattern. Systems-type phenomena are present throughout the various sciences. Just as mathematics is used throughout the sciences, but is consolidated into a discipline of its own, so too systems science should become a better organized and consolidated sub-discipline. As it involves such a diverse range of the sciences, it would be an interdisciplinary field, not one as autonomous as mathematics.

7. While none is fundamental, there is, presumably, a finite set of possible system patterns. I wrote on the possibility of cataloguing and organizing them on some sort of periodic table of logics in “Formal Cognition,” 1 January 2008.

8. Animals have developed a variety of different types and levels of intelligence; there’s no reason to think that machine intelligence will be any different.

9. The problem is that the data sets that we have aren’t big enough, aren’t granular enough and are riddled with bad data. With two points you can’t tell linear from geometric growth. With three points you can’t tell geometric growth from linear growth with noise. And of course it gets much worse than this. And we don’t even know how to operationalize a large segment of the really interesting phenomena. How would we quantify something as elusive as national power (something international relations theorists are trying to do all the time) when even the component statistics are a mess?
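The two-point problem is easy to make concrete. The sketch below is illustrative only: the two data points and the helper names are made up for the purpose. It fits one straight line and one geometric curve through the same pair of observations; both match the data exactly and disagree only once you extrapolate.

```python
def linear_through(p0, p1):
    """Return the straight line passing exactly through two (x, y) points."""
    (x0, y0), (x1, y1) = p0, p1
    slope = (y1 - y0) / (x1 - x0)
    return lambda x: y0 + slope * (x - x0)


def geometric_through(p0, p1):
    """Return the geometric (constant-ratio) curve through two points.
    Assumes both y values are positive."""
    (x0, y0), (x1, y1) = p0, p1
    ratio = (y1 / y0) ** (1 / (x1 - x0))
    return lambda x: y0 * ratio ** (x - x0)


# Hypothetical observations: 100 units at time 0, 110 units at time 1.
points = [(0, 100.0), (1, 110.0)]
lin = linear_through(*points)
geo = geometric_through(*points)

for x in (0, 1, 2, 10, 50):
    print(f"x={x:2d}  linear={lin(x):10.1f}  geometric={geo(x):10.1f}")
```

The two models agree at both observed points and are still close at the next step, so a third measurement with even modest noise would not settle which logic is at work.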