A few weeks ago I went to the most recent installment of the D.C. Future Salon. The presenter was the organizer, Ben Goertzel of Novamente, and the subject was “Artificial Intelligence and the Semantic Web.” One of the dilemmas that Mr. Goertzel teased out of the notion of a semantic web is that complexity is conserved: either it has to be in the software agent or it has to be in the content. If it is in the agent, then it can be highly centralized (a few geniuses develop some incredibly sophisticated agents); if it is in the content, then the distributed community of content providers all have to mark up their content adequately, in a way that simpler agents can process. Mr. Goertzel is hedging his bets: he is interested both in developing more sophisticated agents and in providing a systematic incentive to users to mark up their content.

In the course of discussing how to encourage users to mark up their own content, Mr. Goertzel listed as one of the problems that most people lack the expertise to do so. “What portion of people are adept at formal cognition? One tenth of one percent? Even that?” I had probably heard the phrase or something like it before, but for whatever reason, this time it leapt out. I had a collection of haphazard thoughts for which this seemed a fitting rubric and I was excited that this might be a wheel for which no reinvention was required. When I got home I googled “formal cognition,” figuring there would be a nice write-up of the concept on Wikipedia, but nothing. I fretted: formal cognition could simply mean machine cognition (computers are formal systems). Maybe it was a Goertzel colloquialism and the only idea behind it was a hazy notion that came together in that moment in Mr. Goertzel’s mind.

Anyway, I like the notion and absent an already systemized body of thought called formal cognition, here is what I wish it was.

While the majority might continue to think with their gut and their gonads, a certain, narrow, technocratic elite is in the process of assembling the complete catalog of formally cognitive concepts. There is the set that consists of all valid, irreducibly simple algorithms operative in the world along with their application rules covering range, structure of behavior, variants, proximate algorithms, compatibilities, transition rules, exceptions, et cetera. I am going to show my reductionist cards and say that given a complete set of such algorithms, all phenomena in the universe can be subsumed under one of these rules, or under a number of them acting in conjunction. In addition to there being a complete physis of the world, there is, underlying that, a complete logic of the world.

This reminds me of Kant’s maxim of practical reason that will is a kind of causality and that the free will is the will that is not determined by alien causes, that is, the will that acts according to its own principles, which are reason (see e.g. Groundwork of the Metaphysic of Morals, Part III). It seems to me that a project of delineating the principles of formal cognition is a liberating act insofar as we are casting out the innumerable unconscious inclinations of that dark netherworld of the intuition (gut and gonad), instilled as they were by millennia of survival pressures — the requirements for precision of which were considerably different from those of a modern technological people — in favor of consciously scrutinized and validated principles of thought.

By way of outstanding example, one might be prone to say that evolution is such a logic. At this point evolution has jumped the bank of its originating field of thought, the life sciences, and begun to infect fields far beyond its origin. It is increasingly recognized today that evolution through natural selection is a logical system, one of the fundamental algorithms of the world, of which the common conception of it as a process of life science is merely one instantiation. Perhaps it was only discovered there first because it is in life phenomena that its operation is most aggressive and obvious. But it is now recognized that any place where replication, deviation and competition are found, an evolutionary dynamic will arise. Some cosmologists even propose a fundamental cosmological role for it as some sort of multiverse evolution would mitigate the anthropic problem (that the universe is strangely tuned to the emergence of intelligent life).
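The claim that an evolutionary dynamic arises wherever replication, deviation and competition are present can be made concrete with a toy simulation. What follows is only a minimal sketch under stated assumptions: the bit-string "organisms", the count-the-ones fitness measure, and the names `evolve` and `flip_one_bit` are all hypothetical choices of mine, not anything drawn from the sources discussed here.

```python
import random

def evolve(population, fitness, mutate, generations, rng):
    """Repeat replication, deviation and competition for a number of generations."""
    size = len(population)
    for _ in range(generations):
        # Replication: every individual leaves two copies of itself.
        offspring = [ind for ind in population for _ in range(2)]
        # Deviation: each copy differs slightly from its parent.
        offspring = [mutate(ind, rng) for ind in offspring]
        # Competition: parents and offspring compete for `size` places.
        population = sorted(population + offspring, key=fitness, reverse=True)[:size]
    return population

def flip_one_bit(bits, rng):
    """Hypothetical deviation operator: flip one randomly chosen bit."""
    i = rng.randrange(len(bits))
    return bits[:i] + (1 - bits[i],) + bits[i + 1:]

rng = random.Random(0)  # fixed seed so the run is repeatable
start = [tuple(rng.randint(0, 1) for _ in range(20)) for _ in range(10)]
final = evolve(start, fitness=sum, mutate=flip_one_bit, generations=40, rng=rng)
print(max(map(sum, start)), "->", max(map(sum, final)))
```

Nothing in `evolve` refers to biology. Any replicator, any source of deviation and any fitness measure can be passed in, which is the sense in which the dynamic is independent of its substrate.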

However, note that evolution is a second order logic that arises in the presence of replication, deviation and competition. It would seem that evolution admits of further decomposition and that it is replication, deviation and competition that are fundamental algorithms for our catalog. But even these may be slight variations on still more fundamental algorithms. It strikes me that replication might just be a variant of cycle, related perhaps through something like class inheritance or, more mundanely, through composition (I feel unqualified to comment on identity or subspecies among algorithms because it is probably something that should be determined by the mathematical properties of algorithms).

System. But have I been wrong to stipulate irreducible simplicity as one of the criteria for inclusion in our catalog? The algorithms in which we are interested are more complex than cycle. They are things like induction, slippery-slope, combinatorial optimization or multiplayer games with incomplete information. We have fundamental algorithms and second order or composite algorithms and a network of relations between them. Our catalogue of algorithms is structured.

The thing that I think of most here is Stephen Wolfram’s A New Kind of Science, in which he describes a systematic catalog of enumerated algorithms; that is, there is an algorithm that could generate the entire catalog of algorithms, one after the other. These algorithms each generate certain complex patterns and, as Mr. Wolfram suggests, the algorithms stand behind the phenomena of the material world.

An interesting aside lifted from the Wikipedia page: in his model science becomes a matching problem: rather than reverse engineering our theories from observation, once a phenomenon has been adequately characterized, we simply search the catalogue for the rules corresponding to the phenomenon at hand.
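The matching idea can be sketched with elementary cellular automata, the family of 256 numbered rules that Mr. Wolfram enumerates. This is an illustrative toy under my own assumptions, not his method: the function names `step`, `run` and `matching_rules`, the 31-cell row, the single-cell starting condition and the wrap-around edges are all hypothetical choices.

```python
def step(cells, rule):
    """Apply one elementary cellular automaton rule (0-255) to a row of cells."""
    n = len(cells)
    return tuple(
        # The three-cell neighbourhood, read as a binary number from 0 to 7,
        # selects one bit of the rule number as the cell's next state.
        (rule >> (cells[(i - 1) % n] * 4 + cells[i] * 2 + cells[(i + 1) % n])) & 1
        for i in range(n)
    )

def run(rule, width=31, steps=15):
    """Generate the pattern a rule produces from a single live cell."""
    row = tuple(1 if i == width // 2 else 0 for i in range(width))
    history = [row]
    for _ in range(steps):
        row = step(row, rule)
        history.append(row)
    return history

def matching_rules(observed):
    """Search the whole catalogue for rules that reproduce an observed pattern."""
    width, steps = len(observed[0]), len(observed) - 1
    return [rule for rule in range(256) if run(rule, width, steps) == observed]

# "Characterize" two phenomena, then look them up in the catalogue.
print(matching_rules(run(30)))
print(matching_rules(run(90)))
```

In this sketch the search can return more than one rule for the same observation, since rules that differ only on neighbourhoods that never occur in the pattern are indistinguishable from it. Even in a toy catalogue, then, how well the phenomenon has been characterized decides how far the search narrows the candidates.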

It seems to me that this catalog might be organized according to evolutionary principles. By way of example, I often find myself looking at some particularly swampy-looking plant (this is Washington, D.C.) with an obviously symmetrical growth pattern: say, radial symmetry followed by bilateral symmetry, namely a star pattern of stems with rows of leaves down each side. Think of a fern. Then I see a more modern plant such as a deciduous tree, whose branch growth pattern seems to follow more of a scale symmetry pattern. The fern-like plants look primitive, whereas the deciduous branch patterns look more complex. And one followed the other on the evolutionary trajectory. The fern pattern was one of the first plant structures to emerge following unstructured algae and very simple filament structure moss. The branching patterns of deciduous trees didn’t come along until much later. There are even early trees like the palm tree that are simply a fern thrust up into the air. The reason that fern-like plants predate deciduous trees has to do with the arrangement of logical space. A heuristic traversing logical space encounters the algorithm giving rise to the radial symmetry pattern before it does that of scale symmetry. The heuristic would work the same whether it was encoded in DNA or in binary or any other instantiation you happen to think of.

A fantastical symmetry. I’m going to allow myself a completely fantastical aside here — but what are blogs for?

It is slightly problematic to organize the catalogue on evolutionary principles insofar as they are logical principles and sprang into existence along with space and time. Or perhaps they are somehow more fundamental than the universe itself (see e.g. Leibniz); it is best to avoid the question of whence logic lest one wander off into all sorts of baseless metaphysical speculation. Whatever the case, biological evolution comes onto the scene relatively late in cosmological time. It would seem that the organizing principle of the catalogue would have to be more fundamental than some latter-day epiphenomenon of organic chemistry.

Perhaps the entire network of logic sprang into existence within the realm of possibility all at once, though the emergence of existent phenomena instantiating each rule may have traversed a specific, stepwise path through the catalogue only later. There isn’t a straightforward, linear trajectory from the simple all the way up to the pinnacle of complexity, but rather an iterative process whereby one medium of evolution advances the program of the instantiation of the possible as far as that particular medium is capable before its potential is exhausted. Just as the limits of its possibilities are reached, it gives way to a new medium that instantiates a new evolutionary cycle. The new evolutionary cycle doesn’t pick up where the previous medium left off, but starts all the way from zero. As in Ptolemy’s astronomy, there are epicycles and retrograde motion. But the new medium has greater potential than its progenitor and so will advance further before it too eventually runs up against the limits of its potential. So cosmological evolution was only able to produce phenomena as complex as, say, fluid dynamics. But this gave rise to star systems and planets. The geology of the rocky planets has manifested a larger number of patterns, but most importantly life and the most aggressive manifestation of the catalog of algorithms to date, biological evolution. As has been observed, the most complexly structured three pounds of matter in the known universe is the human brain that everyone carries around in their head.

If life-based evolution has proceeded so rapidly and demonstrated so much potential, it is owing to the suppleness of biology. However, the limits of human potential are already within sight and a new, far more dexterous being, even more hungry to bend matter to logic than biological life ever was has emerged on the scene: namely the Turing machine, or the computer. This monster of reason is far faster, more fluid and polymorphous, adaptable, durable and precise than us carbon creatures. In a comparable compression of time from cosmos to geology and geology to life, the computer will “climb mount improbable,” outstrip its progenitor and explore further bounds of the catalog of logic. One can even imagine a further iteration of this cycle whereby whatever beings of information we bequeath to the process of reason becoming real repeat the cycle: they too reach their limits but give rise to some even more advanced thing capable of instantiating as yet unimagined corners of the catalogue of potential logics.

But there is a symmetry between each instantiation of evolution whereby the system of algorithms was traversed in the same order and along the same pathways. Perhaps more than the algorithms themselves is universal; perhaps the network whereby they are related is as well. That is to say that perhaps there is an inherent organizing structure within the algorithms themselves, a natural ordering running from simple to complex. Evolution is not the principle by which the catalog is organized, but merely a heuristic algorithm that traverses this network according to that organizing principle. Evolution doesn’t organize the catalog, but its operation illuminates the organization of the catalog. Perhaps that is what makes evolution seem so fundamental: that whatever its particular instantiation, it is like running water that flows across a territory defined by the catalogue. Again and again in each new instantiation evolution re-traverses the catalogue. First it did so in energy and matter, then in DNA, then in steel and silicon, now in information.

Anti-System. This is fantastical because, among other reasons, it is well observed that people who are captivated by ideas are all Platonists at heart. I have assiduously been avoiding referring to the algorithms of a system of formal cognition as forms. It all raises the question of whence logic, which, again, is a terrible question.

Of course the notion of formal cognition doesn’t need to be as systematic as what I have laid out so far. Merely a large, unsystematized collection of logically valid methods along with the relevant observations about the limitations, application rules and behaviors of each one would go a significant way toward more reliable reasoning. Perhaps such a thing doesn’t exist at all — I tend towards a certain nominalism, anti-foundationalism and relativism. But the notion of a complete logical space, or a systematic catalog is perhaps like one of Kant’s transcendental illusions — a complete science or moral perfection — the telos, actually attainable or only fantasized, that lures on a certain human endeavor.

Politics. All of this having been said, I remain of the belief that politics is the queen of the sciences. Formal cognition wouldn’t be automated decision making and it could only ever enter into political dialog as decision support or as rhetoric.

As Kant wrote, “Thoughts without content are empty; intuitions without concepts are blind” (Critique of Pure Reason, A51 / B75). Kant developed an idiosyncratic terminology and perhaps another way of phrasing this, more suited to my purpose here, would be to say that formal reason absent empirical data is empty; but that empirical data unsystemized by conceptual apparatus is an incoherent mess. A complete system of the world cannot be worked out a priori and a mere catalogue of all observations about the world would be worse than useless.

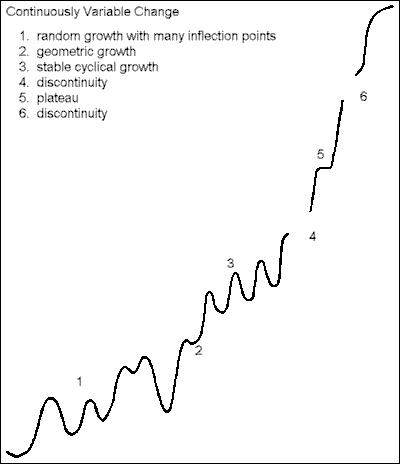

Formally cognitive methods must be brought to bear. And against a complex and messy world I do not think that their application will be unproblematic. In passing above, I mentioned the notion of application rules. Each algorithm has attendant rules regarding when it comes into force, for what range of phenomena it is applicable, when it segues to another applicable algorithm, et cetera. Take for instance the notion of the slippery-slope or the snowball. Not all slippery-slopes run all the way to the bottom. Most are punctuated by points of stability along the way, each with its own internal logic as to when some threshold is overcome and the logic of the slippery-slope resumes once more. Or perhaps some slippery-slope may be imagined to run all the way to the bottom (it’s not ruled out by the logic of the situation) but for some empirical reason in fact does not. Once the principles of formal cognition come up against the formidable empirical world, much disputation will ensue.

Then there is the question of different valuation. Two parties entering into a negotiation subscribe to two (or possibly many, many more) different systems of valuation. Even when all parties are in agreement about methods and facts, they place different weights on the various outcomes and bargaining positions on the table. One can imagine formally cognitive methods having a pedagogic effect and causing a convergence of values over time — insofar as values are a peculiar type of conclusion that we draw from experience or social positionality — but the problems of different valuation cannot be quickly evaporated. One might say that the possibly fundamental algorithm of trade-off operating over different systems of valuation goes a long way toward a definition of politics.

Finally, one could hope that an increased use and awareness of formally cognitive methods might have a normative effect on society, bringing an increased proportion of the citizenry into the fold. But I imagine that a majority of people will always remain fickle and quixotic. Right reasoning can always simply be ignored by free agents — as the last seven years of the administration of George W. Bush, famous devotee of the cult of the gut as he is, have amply demonstrated. As an elitist, I am going to say that the bifurcation between an illuminati and the rabble — as well as the historical swings in power between the two — is probably a permanent fixture of the human condition. In short, there will be no panacea for the mess of human affairs. The problem of politics can never be solved, only negated.